Image-based laser point cloud building facade structure extraction method by considering semantic information

Basic idea and overall design

2D images provide precise topological relationships and abundant texture information, semantic information helps delineate structural boundaries, and 3D laser point clouds provide accurate coordinate information. Combining these complementary data sources can improve the accuracy of extracting facade structures from building 3D LPCD. However, existing methods produce suboptimal results because they lack a data model that integrates 2D images, semantic information, and 3D laser point clouds, so the semantic, textural, and spatial information cannot be fully exploited. Furthermore, building structures exhibit prominent morphological characteristics that can be leveraged to optimize the extracted facade structure line segments. Therefore, a data model that incorporates semantics, 2D images, and 3D laser point clouds is expected to improve the accuracy of building facade extraction.

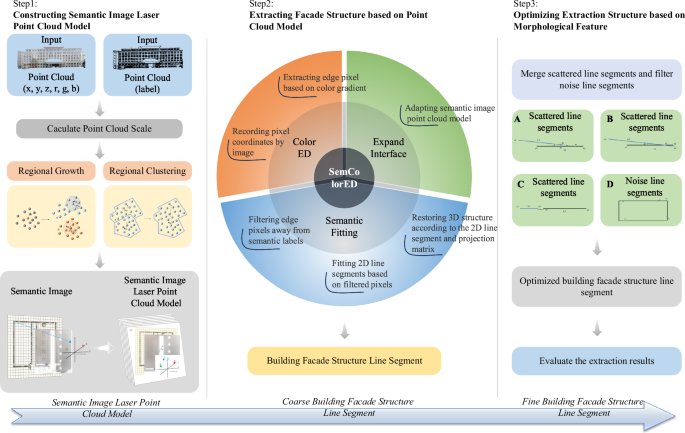

The proposed method involves three main steps (Fig. 1). (1) Constructing the SILPC model. After the scale of the building 3D LPCD is determined, the input point clouds are segmented and clustered with region-growing strategies. Semantic images are then generated from the LPCD of the respective planes to construct the complete SILPC model. (2) Extracting the facade structure based on the point cloud model. Based on the constructed SILPC data model, the SemColorED method is proposed for extracting building 3D facade structures. Edge pixels that do not conform to the assigned semantic labels are filtered out, and the 3D facade structures are restored from the remaining 2D line segments. (3) Optimizing the extracted structure based on morphological features. To make the structural lines complete and accurate, an optimization strategy is proposed based on the morphological characteristics of building facade structures.

The figure systematically delineates the sequential operations, including data acquisition, preprocessing, feature extraction, algorithmic processing, and outcome determination.

SILPC model construction

Semantic information, 2D images, and 3D laser point clouds complement one another, and integrating them can mitigate the influence of point cloud texture and noise. Constructing the SILPC model, which extends our recently proposed image-based LPCD model [19], is therefore crucial for the accurate extraction of building facades. The semantic information used in this study is obtained with our improved CANUPO method, proposed in 2024 [23].

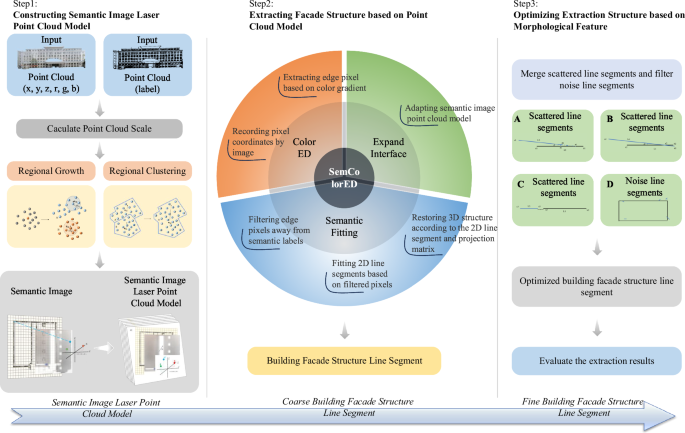

A concept called point cloud scale, \({P}_{{Scale}}\), is proposed so that point cloud models can be constructed accurately and objectively for most buildings. \({P}_{{Scale}}\) is defined as the average spacing between neighboring points in the point cloud, as shown in Fig. 2. Given a point cloud set P = {\({P}_{0},{\,P}_{1},{\,P}_{2}\cdots {P}_{i}\cdots {P}_{k}\)}, the three nearest neighboring points (\({P}_{n1}\), \({P}_{n2}\), \({P}_{n3}\)) are obtained for each point \({P}_{i}\) in P. The average distance between \({P}_{i}\) and its nearest neighbors \({P}_{n1}\), \({P}_{n2}\), and \({P}_{n3}\) is computed for each point, and these per-point values are averaged over the point cloud to determine \({P}_{{Scale}}\), as shown in Formula 1.

$${P}_{{Scale}}=\,\frac{1}{k}\mathop{\sum }\limits_{i=0}^{k}\frac{1}{3}\left[\sqrt{{\left({P}_{n1}-{P}_{i}\right)}^{2}}+\sqrt{{\left({P}_{n2}-{P}_{i}\right)}^{2}}+\sqrt{{\left({P}_{n3}-{P}_{i}\right)}^{2}}\right]$$

(1)

This figure quantitatively represents the intrinsic scale variations within the point cloud, providing a fundamental basis for subsequent analysis.
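The computation in Formula 1 can be illustrated with a minimal Python sketch. It is an illustration under stated assumptions, not the authors' implementation: the function name `point_cloud_scale` is hypothetical, neighbors are found by brute force rather than with a spatial index, and the per-point values are averaged over the number of points in the cloud.

```python
import math

def point_cloud_scale(points, n_neighbors=3):
    """Mean distance from each point to its n_neighbors nearest neighbors,
    averaged over the whole cloud (brute force, O(n^2))."""
    if len(points) <= n_neighbors:
        raise ValueError("need more points than neighbors")
    per_point = []
    for i, p in enumerate(points):
        # Distances from p to every other point, smallest first
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        per_point.append(sum(dists[:n_neighbors]) / n_neighbors)
    return sum(per_point) / len(per_point)

# Toy input: a 3 x 3 unit grid lying in the plane z = 0
grid = [(float(x), float(y), 0.0) for x in range(3) for y in range(3)]
scale = point_cloud_scale(grid)
```

For a real scan, a kd-tree would replace the brute-force search; the quantity being computed stays the same.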

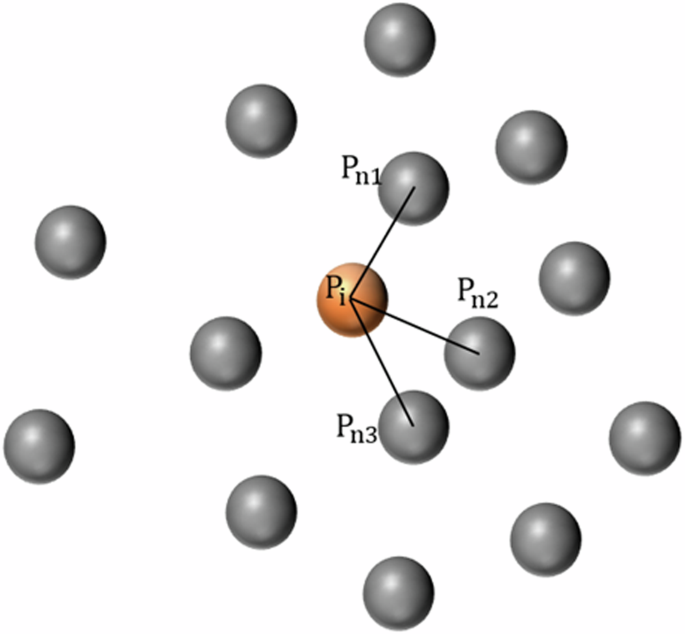

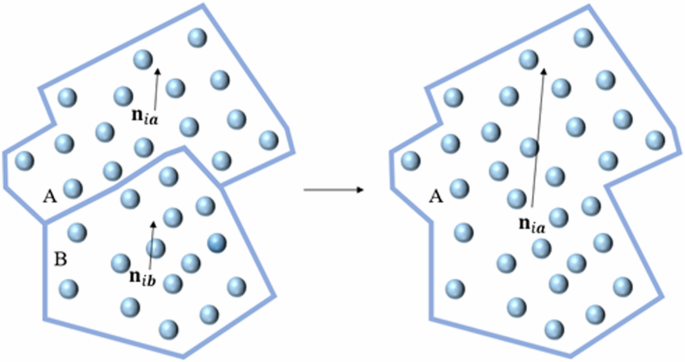

A region-growing-based point cloud segmentation and clustering method is proposed to prevent the loss of crucial structural information, as shown in Algorithm 1. Building 3D LPCD is a 3D dataset that comprises multiple planes, so directly projecting the complete building 3D LPCD into a single 2D image would result in data loss. The proposed method is divided into point cloud segmentation and subsequent clustering. First, the points are sorted in ascending order of curvature. Second, similarity metrics, namely the angle between normals, the color difference, and the distance, are used to assess the similarity between points, as shown in Formula 2. Finally, point cloud segmentation for building planes is accomplished by evaluating whether these metrics conform to predefined threshold values, as shown in Fig. 3.

$$\begin{array}{cc} & \arccos \left(\left|{{\bf{n}}}_{{\rm{i}}}^{\top }\cdot {{\bf{n}}}_{{\rm{j}}}\right|\right)\, < \,{\rm{\theta }},\\ & \left|{{\bf{Col}}}_{{\rm{i}}}-{{\bf{Col}}}_{{\rm{j}}}\right|\, < \,\mathrm{Colth},\\ & \parallel \overrightarrow{{{\bf{P}}}_{{\bf{i}}}{{\bf{P}}}_{{\bf{j}}}}\parallel < \,{\rm{t}}{\rm{h}},\end{array}$$

(2)

where \({{\bf{n}}}_{{\rm{i}}}\) and \({{\bf{n}}}_{{\rm{j}}}\) are the normals of the added point and the point under consideration, respectively, and θ is the angle threshold, set to 15°. \({{\bf{Col}}}_{{\rm{i}}}\) and \({{\bf{Col}}}_{{\rm{j}}}\) are the hue-saturation-value (HSV) colors of the added point and the point under consideration, respectively, where H ranges from 0 to 255, and S and V range from 0 to 100. \({{\bf{P}}}_{{\rm{i}}}\) and \({{\bf{P}}}_{{\rm{j}}}\) are the coordinates of the added point and the point under consideration, respectively. Colth is the color threshold, set to 250, and th is the parallel distance threshold, set to 30\({P}_{{Scale}}\).
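The three conditions of Formula 2 can be written as a single predicate. The sketch below is illustrative only and rests on several assumptions: normals are unit vectors, the color difference is taken as the Euclidean norm of the HSV difference (hue wrap-around is ignored), and the name `is_similar` is hypothetical. The threshold values are those stated in the text.

```python
import math

THETA_DEG = 15.0     # angle threshold from the text
COL_TH = 250.0       # color threshold from the text
DIST_FACTOR = 30.0   # distance threshold is 30 * P_Scale

def is_similar(n_i, n_j, col_i, col_j, p_i, p_j, p_scale):
    """True when all three conditions of Formula 2 hold.
    Assumes unit normals and a Euclidean color difference."""
    dot = abs(sum(a * b for a, b in zip(n_i, n_j)))
    angle = math.degrees(math.acos(min(1.0, dot)))  # clamp guards rounding
    color_gap = math.dist(col_i, col_j)
    spatial_gap = math.dist(p_i, p_j)
    return (angle < THETA_DEG
            and color_gap < COL_TH
            and spatial_gap < DIST_FACTOR * p_scale)

up, down, side = (0.0, 0.0, 1.0), (0.0, 0.0, -1.0), (1.0, 0.0, 0.0)
gray = (0.0, 0.0, 50.0)
same_plane = is_similar(up, down, gray, gray, (0, 0, 0), (0.1, 0, 0), 0.01)
other_plane = is_similar(up, side, gray, gray, (0, 0, 0), (0.1, 0, 0), 0.01)
too_far = is_similar(up, up, gray, gray, (0, 0, 0), (1.0, 0, 0), 0.01)
```

The absolute value of the dot product makes the test insensitive to flipped normals, which is why `up` and `down` count as the same orientation.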

Segmentation of point cloud data into distinct clusters, delineating structural boundaries for further quantitative analysis.

Algorithm 1 Region Growing

Input: thAngle: threshold angle for normal deviation
       pointData: 3D point cloud data
       pcaInfos: PCA information for each point

Output: regions: list of detected regions (clusters)

thNormal ← cos(thAngle) ▷ Compute normal threshold
Sort points by curvature λ0 in ascending order
for each point in sorted list do
    if point is not used then
        Initialize a cluster with the current point
        for each neighboring point do
            Compute normal deviation |n_i · n_j|
            if normal deviation < thNormal then ▷ Angle between normals exceeds thAngle
                continue
            end if
            Compute distance and color deviation
            if distance or color deviation ≥ threshold then
                continue
            end if
            Add point to the cluster and mark it as used
        end for
        Append the cluster to regions
    end if
end for
return regions ▷ Return the detected regions
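Algorithm 1 can be turned into a short runnable sketch. The version below is a simplified illustration, not the authors' code: the neighbor lists and the similarity predicate are supplied by the caller, growth proceeds through a queue of accepted points (a common way to realize region growing, assumed here), and all names are hypothetical.

```python
from collections import deque

def region_growing(curvatures, neighbors, similar):
    """curvatures[i]: curvature of point i.
    neighbors[i]: indices of the points adjacent to point i.
    similar(i, j): True when point j may join the region containing i."""
    order = sorted(range(len(curvatures)), key=lambda i: curvatures[i])
    used = [False] * len(curvatures)
    regions = []
    for seed in order:                 # flattest points are seeded first
        if used[seed]:
            continue
        used[seed] = True
        cluster = [seed]
        queue = deque([seed])
        while queue:                   # grow outward from accepted points
            current = queue.popleft()
            for nb in neighbors[current]:
                if used[nb] or not similar(current, nb):
                    continue
                used[nb] = True
                cluster.append(nb)
                queue.append(nb)
        regions.append(cluster)
    return regions

# Toy example: six points on a chain, with a jump between index 2 and 3
values = [0.0, 0.0, 0.0, 5.0, 5.0, 5.0]
chain = [[1], [0, 2], [1, 3], [2, 4], [3, 5], [4]]
curv = [0.3, 0.1, 0.2, 0.2, 0.1, 0.3]
regions = region_growing(curv, chain, lambda i, j: abs(values[i] - values[j]) < 1.0)
```

In a facade setting, `similar` would combine the normal, color, and distance tests of Formula 2, and `neighbors` would come from a nearest-neighbor query on the point cloud.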

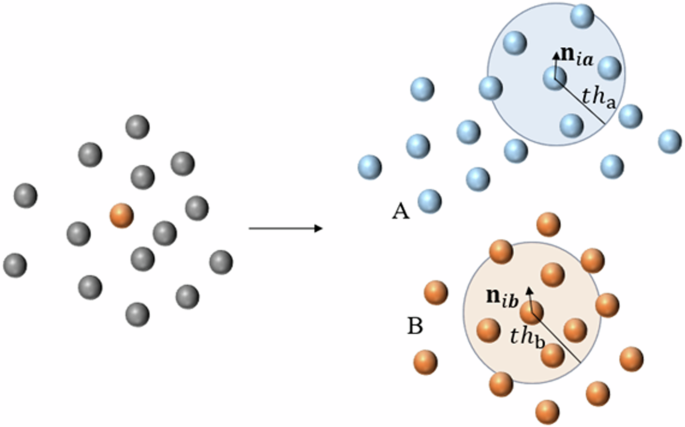

The growth threshold must be strictly controlled during point cloud segmentation to ensure accuracy. As a result, over-segmentation is inevitable. A point cloud clustering strategy is proposed to mitigate the excessive fragmentation of planes, as shown in Algorithm 2. Adjacent point cloud planes with similar colors and normal vectors are merged. The region-growing-based point cloud clustering method uses the same principles and similarity metrics as the segmentation step but grows over neighboring regions rather than neighboring points [26]. An initial seed region is selected, and neighboring regions that resemble the seed region are subsequently incorporated, as shown in Fig. 4.

Clustering of point cloud data into homogeneous groups on the basis of spatial similarity, which facilitates the extraction of latent structural patterns for subsequent quantitative analysis.

Algorithm 2 Region Clustering

Input: thAngle: threshold angle for plane deviation
       regions: input regions

Output: regions: merged regions

Step 1: Fit plane for each region using PCA
for i ∈ 1…regions.size() do
    Extract points for region i
    Apply PCA to extract plane information for region i
    Calculate average scale scaleAvg and update patches[i].scale
end for
Step 2: Label each point with its region
for i ∈ 1…regions.size() do
    Label each point in regions[i] with its region index
end for
Step 3: Find adjacent patches
for i ∈ 1…patches.size() do
    for each neighboring point in patches[i] do
        Check if the neighboring point belongs to an adjacent patch
        if true then
            Add it to the list of adjacent patches for patches[i]
        end if
    end for
end for
Step 4: Merge adjacent patches
for i ∈ 1…patches.size() do
    if patch i is not merged then
        Start a new cluster with patch i
        while not all adjacent patches processed do
            for each adjacent patch do
                Check if merge conditions (angle, distance, color) are met
                if conditions are met then
                    Add patch to the cluster
                end if
                if cluster size exceeds thRegionSize then
                    Stop merging
                end if
            end for
        end while
    end if
end for
return regions

The point cloud clustering strategy mainly involves three steps.

(1) Fitted planes are computed for the segmented point clouds, along with their normal vectors, colors, and distribution scale. Then, each point is labeled with its region to facilitate subsequent indexing.

(2) Based on the point cloud labels, the neighboring regions of each plane region are retrieved (i.e., regions in which adjacent points carry a different label). The suitability of each neighboring region for clustering is then determined with a predefined similarity criterion. The point cloud regions that meet the clustering requirements are merged and given a unified label.

(3) These steps are repeated iteratively until no region in the dataset meets the clustering requirements.
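The merge-and-relabel loop described in these three steps can be sketched with a union-find structure. This is a simplified illustration under assumptions: patch adjacency and the merge criterion are given as inputs, the criterion is evaluated on the original patches rather than on re-fitted merged regions, the region size limit of Algorithm 2 is omitted, and the function name `merge_patches` is hypothetical.

```python
def merge_patches(n_patches, adjacency, mergeable):
    """adjacency: set of (i, j) index pairs of touching patches.
    mergeable(i, j): True when the two patches are similar enough.
    Returns one label per patch; merged patches share a label."""
    parent = list(range(n_patches))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    changed = True
    while changed:                 # repeat until no pair can be merged
        changed = False
        for i, j in sorted(adjacency):
            ri, rj = find(i), find(j)
            if ri != rj and mergeable(i, j):
                parent[max(ri, rj)] = min(ri, rj)  # unified label
                changed = True
    return [find(i) for i in range(n_patches)]

# Toy example: patch orientations in degrees, chained adjacency
orientation = [0.0, 3.0, 90.0, 92.0]
touching = {(0, 1), (1, 2), (2, 3)}
labels = merge_patches(4, touching,
                       lambda i, j: abs(orientation[i] - orientation[j]) < 10.0)
```

A fuller version would re-fit the plane of each merged region before the next pass, which is where the repeated iteration of step (3) becomes essential.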

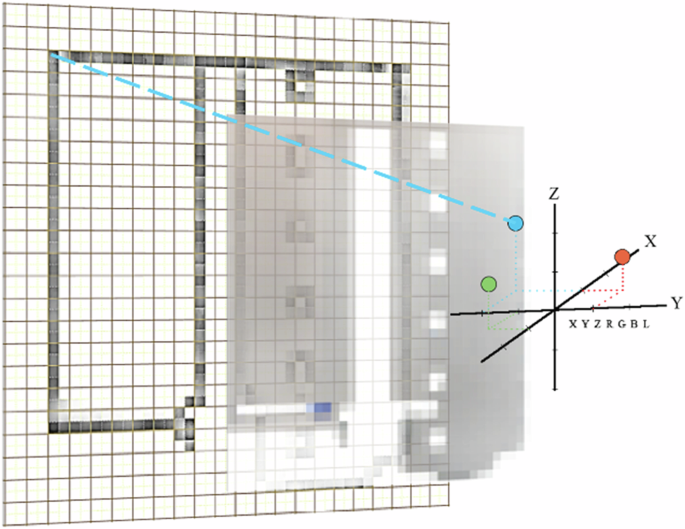

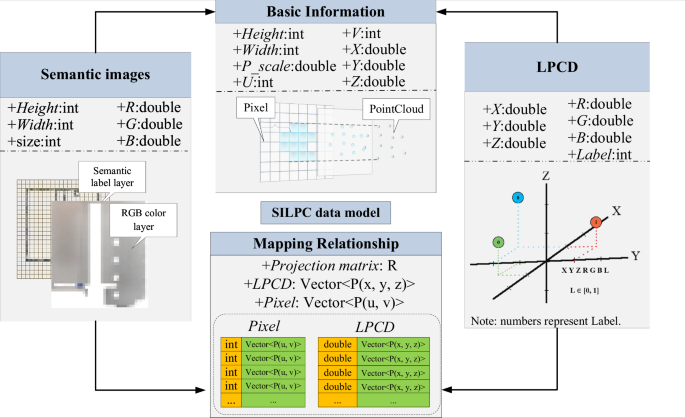

The semantic image is a 2D image that contains semantic information, created by projecting the point cloud onto its planes. The image consists of RGB color and semantic label layers, as shown in Fig. 5. To generate a semantic image, a projection strategy maps the clustered point cloud planes onto a 2D image by establishing a correspondence between 3D spatial coordinates and 2D pixels. The projection strategy mainly involves three steps. First, the points that belong to each plane are projected onto the corresponding plane. Second, the grid method is used to transform the 2D coordinates into pixel coordinates. The grid width is set to the maximum point cloud scale within the corresponding plane, as shown in Formula 3.

$$\begin{array}{c}{u}_{i}=\left[\left({x}_{i}-{x}_{min}\right)/{S}_{max}\right],\\ {v}_{i}=\left[\left({y}_{i}-{y}_{min}\right)/{S}_{max}\right],\end{array}\quad S=\left\{{P}_{{Scale}\,i},\,i\in {N}^{* }\right\}$$

(3)

where \({u}_{i}\) and \({v}_{i}\) are the pixel coordinates, P (\({x}_{i},\,{y}_{i}\)) is the 2D coordinate of a projected point, P (\({x}_{min},\,{y}_{min}\)) is the minimum point within the 2D coordinate system, and \({S}_{max}\) is the maximum value of the point cloud scale set S.

Semantic annotation of image data into distinct class-specific regions, delineating categorical boundaries for subsequent quantitative analysis.

Finally, the semantic image is created by merging the RGB color and semantic label layers using the semantic labels and RGB color properties of the point cloud. Furthermore, the point cloud, semantic image, and projection matrix are recorded to construct the SILPC model, as shown in Fig. 6.

The SILPC data model describes the integrative architecture of the multi-dimensional data and articulates the relationships between the key components for a robust quantitative evaluation.
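The grid mapping of Formula 3 and the two-layer semantic image can be sketched as follows. This is an illustration under assumptions: the square brackets in Formula 3 are read as the floor operation, points are already expressed in the 2D coordinates of their plane, the last point that falls into a cell determines its color and label, and the names `SemanticImage` and `rasterize` are hypothetical. A complete SILPC record would also store the point cloud and the projection matrix.

```python
import math
from dataclasses import dataclass, field

@dataclass
class SemanticImage:
    width: int
    height: int
    rgb: dict = field(default_factory=dict)    # (u, v) -> (r, g, b)
    label: dict = field(default_factory=dict)  # (u, v) -> semantic label

def rasterize(points_2d, colors, labels, s_max):
    """Map in-plane 2D points to pixels with grid width s_max (Formula 3)."""
    x_min = min(x for x, _ in points_2d)
    y_min = min(y for _, y in points_2d)
    pixels = [(math.floor((x - x_min) / s_max),
               math.floor((y - y_min) / s_max)) for x, y in points_2d]
    image = SemanticImage(width=max(u for u, _ in pixels) + 1,
                          height=max(v for _, v in pixels) + 1)
    for (u, v), color, lab in zip(pixels, colors, labels):
        image.rgb[(u, v)] = color   # last point in a cell wins
        image.label[(u, v)] = lab
    return image, pixels

pts = [(0.0, 0.0), (0.25, 0.1), (1.0, 0.5)]
cols = [(255, 0, 0), (0, 255, 0), (0, 0, 255)]
labs = ["wall", "wall", "window"]
image, pixels = rasterize(pts, cols, labs, s_max=0.5)
```

Keeping the returned pixel list alongside the points preserves the 3D-to-2D correspondence that later allows 2D line segments to be restored to 3D.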

Facade structure extraction based on point cloud model

An extraction method called SemColorED is introduced to detect and extract facade structures from the SILPC model. SemColorED extends the ColorED detector [24] with an expanded input interface so that semantic information enters the decision-making of the algorithm. After the ColorED detector extracts edge pixels, their relevance is determined from the semantic label layer of the image. Only the pixels within the semantic range and those that meet the predefined threshold are kept, as shown in Fig. 7. The distance threshold for finding the nearest labeled point in the semantic layer to a given target pixel is adjusted dynamically based on \({P}_{{Scale}}\).

a Located in the semantic range; b Outside the semantic range.

The proposed method involves three key steps. (1) Construction of the semantic image index. A kd-tree index is constructed from the semantic label and RGB color layers of the point cloud model. (2) Extraction of the facade structure point cloud. The ColorED detector extracts edge pixels from the RGB color layer. Then, a search is conducted to determine which edge pixels are adjacent to the semantic regions corresponding to building facades. (3) Fitting of structural line segments. The edge pixels are fitted to create facade line segments. Finally, the projection matrix stored in the model is used to restore the 3D structure from the 2D facade.
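The semantic filtering in step (2) can be illustrated with a small sketch. It is not the SemColorED implementation: the edge pixels are assumed to be already detected, the nearest labeled pixel is found by brute force instead of with a kd-tree, and the name `filter_edges_by_semantics` and the threshold value are hypothetical.

```python
import math

def filter_edges_by_semantics(edge_pixels, facade_pixels, max_dist):
    """Keep edge pixels whose nearest facade-labeled pixel is within max_dist.
    A pixel inside the semantic range has distance 0 and is always kept."""
    if not facade_pixels:
        return []
    kept = []
    for edge in edge_pixels:
        nearest = min(math.dist(edge, f) for f in facade_pixels)
        if nearest <= max_dist:
            kept.append(edge)
    return kept

edges = [(0, 0), (5, 5), (10, 10)]
facade = [(0, 0), (5, 6)]
kept = filter_edges_by_semantics(edges, facade, max_dist=1.5)
```

In the method described above, `max_dist` would be derived from \({P}_{{Scale}}\) rather than fixed by hand.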

Extraction structure optimization based on morphological feature

To improve the accuracy of extracting facade structures, an optimization method based on the morphological characteristics of building facades is proposed. This method mitigates the adverse effects of scattered line segments and of noisy line segments, which are short and inconsistent with the main direction. The optimization process consists of two parts. (1) Scattered line segments are merged by considering the vectors and distances between them. (2) Noise line segments that deviate from the primary orientation of the building are filtered out.

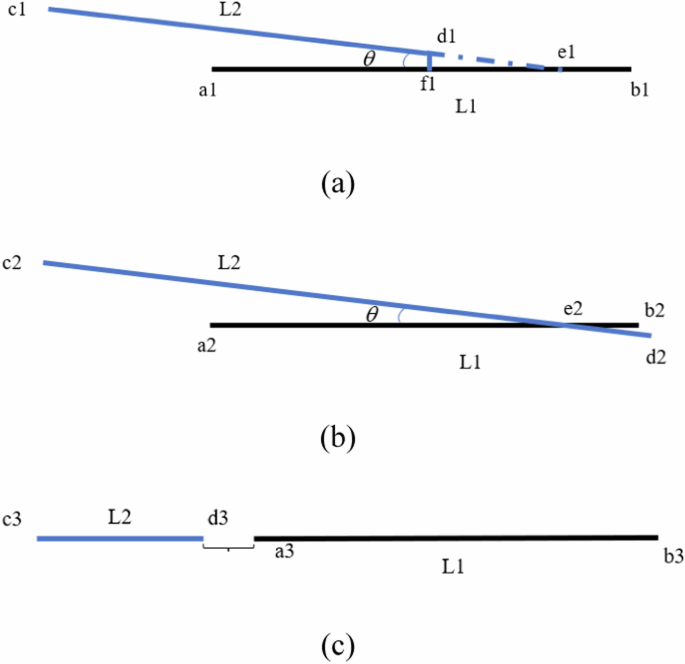

Three scenarios must be assessed to determine whether scattered structural line segments need to be merged, as shown in Fig. 8. (1) The vectors are approximately parallel, the segments meet the length threshold, and the extension of one line segment intersects the other. (2) The line segments intersect, and the vectors are approximately parallel with a suitable distance. (3) The vectors are parallel, and the spacing between the segments meets the length threshold. The angle threshold for approximate parallelism is set to 10°, and the distance threshold adjusts dynamically with \({P}_{{Scale}}\).

Presentation of three different assessment scenarios, each representing a specific scene condition, where (a), (b), and (c) correspond in turn to cases (1), (2), and (3) above.
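A simplified merge test for two line segments can be sketched as below. It does not distinguish the three scenarios individually; it only combines the 10° parallelism threshold from the text with an endpoint-gap check. The factor `gap_factor` that ties the distance threshold to \({P}_{{Scale}}\) is a hypothetical value, since the text does not state it, and segments are assumed to be 2D with non-zero length.

```python
import math

def _direction(seg):
    (x1, y1), (x2, y2) = seg
    length = math.hypot(x2 - x1, y2 - y1)  # assumed non-zero
    return ((x2 - x1) / length, (y2 - y1) / length)

def angle_between(seg_a, seg_b):
    """Unsigned angle in degrees between two segments, in [0, 90]."""
    ax, ay = _direction(seg_a)
    bx, by = _direction(seg_b)
    return math.degrees(math.acos(min(1.0, abs(ax * bx + ay * by))))

def endpoint_gap(seg_a, seg_b):
    """Smallest distance between an endpoint of seg_a and one of seg_b."""
    return min(math.dist(p, q) for p in seg_a for q in seg_b)

def should_merge(seg_a, seg_b, p_scale, angle_th=10.0, gap_factor=5.0):
    # gap_factor is a hypothetical choice, not a value from the paper
    return (angle_between(seg_a, seg_b) < angle_th
            and endpoint_gap(seg_a, seg_b) < gap_factor * p_scale)

a = ((0.0, 0.0), (1.0, 0.0))
b = ((1.1, 0.01), (2.0, 0.02))   # nearly collinear continuation of a
c = ((1.0, 0.0), (1.0, 1.0))     # perpendicular to a
d = ((5.0, 0.0), (6.0, 0.0))     # parallel to a but far away
merge_ab = should_merge(a, b, p_scale=0.05)
merge_ac = should_merge(a, c, p_scale=0.05)
merge_ad = should_merge(a, d, p_scale=0.05)
```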

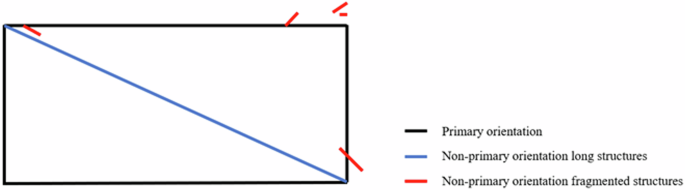

Most buildings are constructed with a rectangular structure supplemented by triangular frameworks to ensure structural stability. Therefore, the noise line segment filtering method groups parallel structural vectors into one category, and the two categories that contain the most segments and have mutually perpendicular directions are taken as the primary building orientation. The building structure lines are divided into primary orientation structures, non-primary orientation long structures, and non-primary orientation fragmented structures (noisy line segments), as shown in Fig. 9. The extraction results are then filtered by removing the fragmented non-primary orientation structures. The line segment length threshold adjusts dynamically with \({P}_{{Scale}}\).

Building structure lines categorized by orientation: primary orientation (black), non-primary long structures (blue) and non-primary fragmented structures (red).
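The orientation-based filtering can be sketched in Python as follows. This is a rough illustration, not the authors' procedure: segments are 2D, directions are grouped into fixed angular bins, and the bin width, perpendicularity tolerance, and function name are all hypothetical choices.

```python
import math
from collections import Counter

def filter_by_primary_orientation(segments, min_length,
                                  bin_deg=10.0, perp_tol_deg=10.0):
    """Keep segments in the two dominant perpendicular direction bins,
    plus any other segment at least min_length long."""
    def angle(seg):
        (x1, y1), (x2, y2) = seg
        return math.degrees(math.atan2(y2 - y1, x2 - x1)) % 180.0

    n_bins = int(round(180.0 / bin_deg))
    bins = [int(round(angle(s) / bin_deg)) % n_bins for s in segments]
    counts = Counter(bins)

    # Pick the pair of roughly perpendicular bins with the most segments
    best, best_score = set(), -1
    for a in counts:
        for b in counts:
            if a < b and abs((b - a) * bin_deg - 90.0) <= perp_tol_deg:
                score = counts[a] + counts[b]
                if score > best_score:
                    best, best_score = {a, b}, score
    if not best and counts:          # fallback: single dominant direction
        best = {counts.most_common(1)[0][0]}

    return [s for s, b in zip(segments, bins)
            if b in best or math.dist(*s) >= min_length]

short_diag = ((0.0, 0.0), (0.1, 0.1))
long_diag = ((0.0, 0.0), (3.0, 3.0))
segs = [((0.0, 0.0), (1.0, 0.0)), ((0.0, 1.0), (1.0, 1.0)),
        ((0.0, 2.0), (2.0, 2.0)), ((0.0, 0.0), (0.0, 1.0)),
        ((1.0, 0.0), (1.0, 2.0)), short_diag, long_diag]
filtered = filter_by_primary_orientation(segs, min_length=1.0)
```

In the example, the horizontal and vertical segments form the primary orientation, the long diagonal is kept as a non-primary long structure, and the short diagonal is removed as noise.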

Evaluation metrics

The extraction results of building facade structures can be categorized into two distinct scenarios: accurate extraction and inaccurate extraction. A binary classification is used to represent the extraction results. Positive results are labeled as 1 and negative as 0. Table 1 shows four distinct states based on the truth and extraction values of the final results.

Three metrics, namely Accuracy, Recall, and Integrities, are used to evaluate the facade extraction results based on the confusion matrix.

(1) Accuracy is the ratio of correctly extracted values to the total number of extracted values (a quantity commonly called precision), and it evaluates the overall correctness of everything that was extracted.

$${Accuracy}=\frac{{TP}}{{TP}+{FP}}$$

(4)

(2) Recall corresponds to the ratio of correctly extracted values to the total number of true values, a statistical measure to evaluate the effectiveness of building structure extraction.

$${Recall}=\frac{{TP}}{{TP}+{FN}}$$

(5)

(3) Integrities measures how closely the accurately extracted structural line length (\({L}_{{total}}\times {Accuracy}\)) matches the true length, which is a crucial metric for evaluating the completeness of facade structure line extraction.

$${Integrities}=1-\frac{\left|{L}_{{total}}\times {Accuracy}-{L}_{{true}}\right|}{{L}_{{true}}}$$

(6)

where \({L}_{{total}}\) is the total length of line segments obtained through the facade extraction method, and \({L}_{{true}}\) is the true length of the manually extracted building facade structures.
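Formulas 4 to 6 translate directly into code. The sketch below uses made-up counts and lengths purely for illustration; the function names are hypothetical.

```python
def accuracy(tp, fp):
    """Formula 4: correctly extracted values over all extracted values."""
    return tp / (tp + fp)

def recall(tp, fn):
    """Formula 5: correctly extracted values over all true values."""
    return tp / (tp + fn)

def integrities(l_total, l_true, acc):
    """Formula 6: one minus the relative error of the accurate length."""
    return 1.0 - abs(l_total * acc - l_true) / l_true

# Illustrative numbers only, not results from the paper
tp, fp, fn = 80, 20, 10
acc = accuracy(tp, fp)
rec = recall(tp, fn)
integ = integrities(l_total=110.0, l_true=100.0, acc=acc)
```

With these inputs, 110 extracted length units at an Accuracy of 0.8 give 88 accurate units against a true length of 100, so Integrities is about 0.88.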

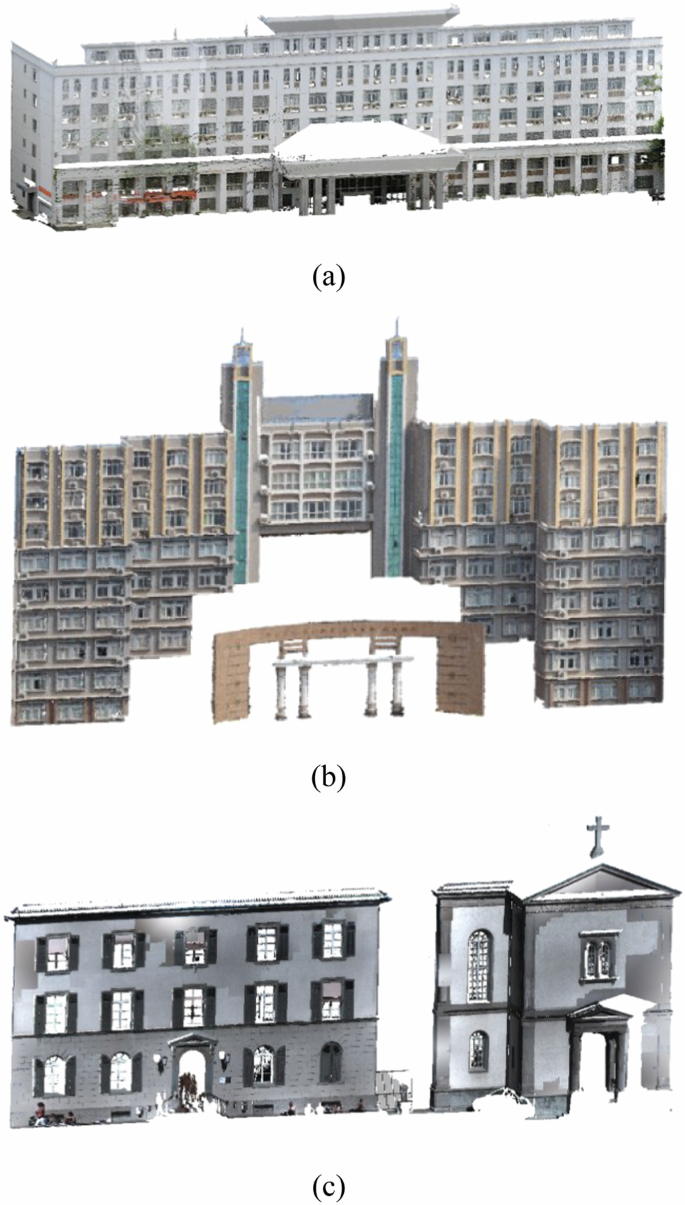

Data set

Three groups of building 3D LPCD are used as test data, as shown in Fig. 10. Groups 1 and 2 were collected with a RIEGL VZ-1000 3D laser scanner at JXUST. Group 1 was collected by scanning from the west side of the CSMECB and is colored using the transformation matrix obtained by registering the scanner with the camera. The CSMECB contains mostly regular line-segment facade structures, such as rectangular doors, windows, cylindrical pillars, and awnings. Group 2 was collected by scanning from the south side of the Administration Building and is colored using a transformation matrix calculated from manually selected points. Issues, such as inaccurate color positioning and texture deformation in CSMECB, serve as a practical test of the robustness of the proposed method. Group 3, from the open-source data set Stgallen3 [25], is a small building with multiple complex structures composed of line and curve segments. Details of the test data are shown in Table 2.

a Group 1: CSMECB; b Group 2: Administration Building; c Group 3: Stgallen3.
